4.1 Ollama framework

Ollama is an open-source framework designed to run Large Language Models (LLMs) locally and privately on macOS, Windows, and Linux. The Metis hardware can run large LLMs, such as 70B-class models (e.g., Llama 3 70B) in 4-bit quantization, or even larger models that consume up to 192 GB of VRAM. The model list and documentation are available via the Ollama Model Library.

A dedicated AI server node, llm1, with two RTX6000 Pro GPU cards, each with 96 GB of VRAM, has been added to the Metis cluster. The server is dedicated to running AI models using the Ollama framework via interactive batch jobs and the WebUI interface. Additionally, all compute nodes can run smaller Ollama models on their NVIDIA A100 GPUs (40 GB VRAM).
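To judge which GPU a model fits on, a rough estimate of the weight size is often enough. The sketch below assumes the common rule of thumb that the weights occupy (number of parameters) x (bits per weight) / 8 bytes. It ignores the KV cache and runtime overhead, so real VRAM use is higher. The function name weights_gb is hypothetical.

```shell
# Rough size of the model weights in GB:
# parameters (in billions) x bits per weight / 8.
# Weights only: the KV cache and runtime overhead come on top of this.
weights_gb() {
    echo $(( $1 * $2 / 8 ))
}

weights_gb 70 4    # 70B model in 4-bit quantization
weights_gb 70 16   # the same model at 16-bit precision
```

By this estimate, a 4-bit 70B model needs about 35 GB for its weights alone, which leaves very little headroom on a 40 GB A100, while the 16-bit variant (about 140 GB) exceeds a single 96 GB card and only fits within the 192 GB total of the llm1 node.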

4.2 Ollama environment modules

We provide two environment modules to set up Ollama.

module load ollama/ollama-llm1

The ollama-llm1 module enables access to the Ollama server running on the llm1 node, which is equipped with several popular AI models, including llama3, qwen3, gpt-oss, phi4, deepseek, etc. Use the 'ollama list' command to display the local list, and the https://ollama.com/search page to list the models available on the Ollama website. Any model from these lists can be used via the 'ollama run' command and will be stored in the /lstr/sahara/ollama/ollama directory. The disk space consumed is charged to the special "ollama" group account.

module load ollama/ollama-latest

This module provides an environment for a personal Ollama server, which must be started via the 'ollama serve &' command. The models used will be stored under /lstr/saharaPrimaryGroup/ollama/UserName, and the disk space consumed is charged to the user's primary group account (use the 'id -gn' and 'id -un' commands on Metis to find your primary group and username).

4.3 Ollama interactive jobs

- Regular compute node jobs

Ollama interactive jobs that use personal Ollama servers can be started on any regular compute node via the standard job submission procedure. For example, to reserve one GPU, 16 CPUs, and 128 GB of RAM on any of the cn01-cn32 nodes, one can use

qsub -I -l select=1:ngpus=1:ncpus=16:mem=128gb,walltime=02:00:00

followed by these commands on the allocated node:

[user@cnXX ~]$ module load ollama/ollama-latest

[user@cnXX ~]$ ollama serve &

[user@cnXX ~]$ ollama list

[user@cnXX ~]$ ollama run model-name
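Because 'ollama serve &' returns immediately, a command such as 'ollama list' may run before the personal server is ready to answer. The following is a minimal sketch of a readiness check. It assumes the server listens on Ollama's default address, http://127.0.0.1:11434; the module may configure a different address (for example via the OLLAMA_HOST variable), in which case the URL must be adjusted. The helper name wait_for_ollama is hypothetical.

```shell
# Poll the server URL once per second until it answers or the tries run out.
# Arguments (both optional): URL to probe, maximum number of tries.
# The default URL is an assumption based on Ollama's default port.
wait_for_ollama() {
    url="${1:-http://127.0.0.1:11434}"
    tries="${2:-30}"
    while [ "$tries" -gt 0 ]; do
        # --fail makes curl return non-zero on HTTP errors as well
        if curl --silent --fail --max-time 2 --output /dev/null "$url"; then
            return 0
        fi
        tries=$((tries - 1))
        sleep 1
    done
    return 1
}

# Typical use inside the interactive job:
#   ollama serve > ollama.log 2>&1 &
#   wait_for_ollama && ollama list
```

Redirecting the server output to a log file, as in the commented usage, keeps the server messages from mixing with the interactive session.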

- Dedicated AI node jobs

These jobs assume the use of the ollama/ollama-llm1 module and can be started as

qsub -I -q qllm -l select=1:ncpus=16:mem=128gb,walltime=02:00:00

followed by these commands on the allocated node (no 'ollama serve' step is needed, since the module connects to the Ollama server already running on llm1):

[user@cnXX ~]$ module load ollama/ollama-llm1

[user@cnXX ~]$ ollama list

[user@cnXX ~]$ ollama run model-name
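Besides the interactive chat started by 'ollama run model-name', the 'ollama run' command also accepts the prompt as an argument after the model name, prints the answer, and exits. The sketch below wraps this in a small helper that first checks that the ollama command is available, that is, that one of the modules has been loaded. The helper name ask_model is hypothetical, and model-name remains a placeholder for a model shown by 'ollama list'.

```shell
# Send a single prompt to a model and print the reply (non-interactive).
# Usage: ask_model MODEL PROMPT...
ask_model() {
    model="$1"
    shift
    # Fail early if no Ollama module has been loaded in this shell
    if ! command -v ollama >/dev/null 2>&1; then
        echo "ollama not found: load an ollama module first" >&2
        return 127
    fi
    # All remaining arguments are joined into one prompt string
    ollama run "$model" "$*"
}

# Example (model-name is a placeholder):
#   ask_model model-name "Summarize what a PBS select statement does."
```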

4.4 WebUI interface

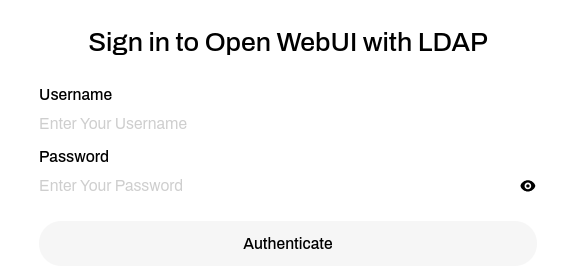

All active Metis users can use the Ollama web interface at https://vmlab.niu.edu. NIU VPN is required for off-campus access. To access the site, sign in using your Metis credentials.

The site provides immediate access to the following popular models:

- General Purpose

- Coding

- Multimodal/Vision Models

Additionally, any Ollama-compatible model can be added to the WebUI upon request to crcdhelpdesk@niu.edu.